Well… whose ratings should a wine drinker pay attention to? Or, stated with a tad more grammatical correctness (warning: sounding-like-douche-bag-potential alert!), To Whose Ratings Should A Wine Drinker Pay More Attention?

An American Association of Wine Economists (AAWE) working paper with that title was just released, though, interestingly, it doesn’t actually answer the question. I will answer it, in a few minutes anyway, but not before torturing you with exposition and report dissection first. Because, well, I’m really just not that nice of a guy.

Despite the bait-and-switch title, the paper starts with a fascinating premise: given that ratings for the same wines vary between professional wine critics (called “experts” in the paper’s lingo), is there an established expert whose ratings correlate closely with those of the general wine-drinkin’ public?

Turns out, there is one – at least, there is one out of the three expert sources that the paper used.

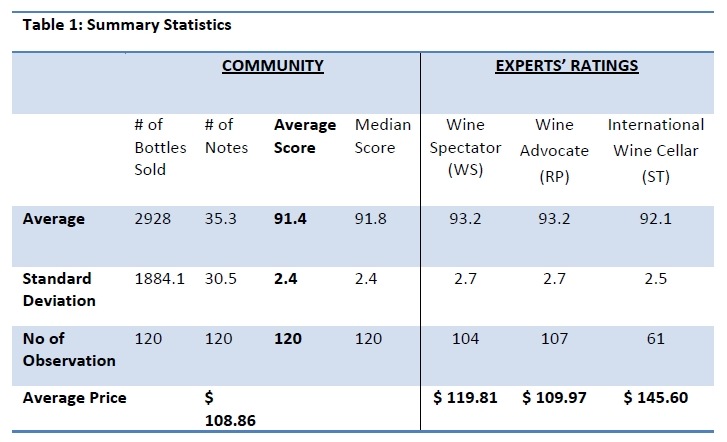

The paper’s authors, Omer Gokcekus and Dennis Nottebaum (no, I do not know how to pronounce those), chose to examine ratings/scores of 120 Bordeaux wines from the 2005 vintage. The voice of the people was played by the scores for those wines as recorded in Cellar Tracker, subsets of which were then compared with the scores for the same wines as reported by three pro wine critic sources. Big-time influencer Robert Parker (via The Wine Advocate) was included, as well as Wine Spectator, so they covered the 1.5 most influential wine critics in the U.S. The third included was Stephen Tanzer’s International Wine Cellar, though to be honest I’ve no idea why they included that last one. Just kidding, Stephen!

Anyway… It’s important to note that the results were aggregated, which makes them a tad misleading: the same wines were not compared across all three pro critics and Cellar Tracker. Instead, a different subset of the wines was compared in each pairing (CT to RP, CT to WS, and CT to ST). These were not the same wines (or the same number of wines) in each case, so while some wines in the group were compared against all four ratings sources, others were only compared between Cellar Tracker and one of the pro sources. Got it? Good!
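For the code-minded, here is a rough sketch of what that kind of pairwise setup looks like. To be clear, this is my own illustration and not the authors' method or data: the wine names, the scores, and the `pearson` and `compare` helpers are all made up, and I'm assuming a plain Pearson correlation computed only on the wines that both sources scored.

```python
from math import sqrt

# Made-up scores for illustration only; none of these numbers come from the paper.
cellar_tracker = {
    "Wine A": 91.0, "Wine B": 89.5, "Wine C": 93.0, "Wine D": 88.0, "Wine E": 90.5,
}
critics = {
    "RP": {"Wine A": 95, "Wine B": 90, "Wine C": 94, "Wine D": 92},
    "WS": {"Wine A": 93, "Wine C": 95, "Wine E": 91},
    "ST": {"Wine B": 90, "Wine C": 94, "Wine D": 89, "Wine E": 91},
}


def pearson(xs, ys):
    """Plain Pearson correlation coefficient for two equal-length lists."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


def compare(community, critic):
    """Correlate two sources using only the wines that both of them scored."""
    shared = sorted(set(community) & set(critic))
    r = pearson([community[w] for w in shared], [critic[w] for w in shared])
    return shared, r


# Each pairing (CT to RP, CT to WS, CT to ST) gets its own subset of wines.
results = {name: compare(cellar_tracker, scores) for name, scores in critics.items()}

# Wines that appear in every one of the four sources.
in_all_sources = set(cellar_tracker).intersection(*(set(s) for s in critics.values()))

for name, (shared, r) in results.items():
    print(f"CT vs {name}: {len(shared)} shared wines, r = {r:.2f}")
print(f"Wines scored by all four sources: {sorted(in_all_sources)}")
```

Each pairing ends up with its own list of shared wines, which is exactly why lining the three correlations up side by side calls for a bit of caution.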

Overlooking that minor cavil, the results are pretty darn fascinating…

What does the paper reveal to us in terms of insights gleaned from the study?

- Cellar Tracker users are stern reviewers, giving lower ratings overall than the pros. This will come as a surprise to roughly 0% of all regular CT users.

- The experts agreed more with each other (had a higher score correlation) than they did with the general public. Anyone who has ever followed wine scores will be nodding their heads at that one, too.

- Surprisingly, wine prices correlated more strongly with the median community score than with expert scores. I say “surprisingly” not because I think that pro wine critics’ scores would be the more likely price drivers, but because this result is probably what you’d expect to see in a free market economy – and the wine sales business in the U.S. in particular is more like a totalitarian regime than a free market. Ok, sorry, couldn’t resist that…

- The pro whose ratings most closely correlated with the general public was… Stephen Tanzer! Damn, too bad nobody from the U.S. reads his stuff. Just kidding, Stephen!

Of course, one could make a serious argument that Cellar Tracker users don’t necessarily represent the average wine drinker and are skewed more towards hard-core consumers, but I think it’s as close an approximation as the AAWE is likely to get with a database of scores that can be compared with those of the pros. If you are a CT user, though, this paper suggests that Tanzer is your man; the paper also suggests that you might hate Robert Parker:

“…these regular wine drinkers resent Robert Parker’s influence ‐or shall we say hegemony over the wine community ‐ and systematically challenge his ratings by either giving higher scores to the wines with low RP ratings and lower scores to the wines with high RP ratings.”

Yeah, well, that’s one explanation for why there’s low correlation between RP’s ratings and the CT ratings for the same wines, anyway. But is it likely that there’s a huge RP-resentment conspiracy, or even a general negative vibe infecting millions of CT users and subconsciously affecting their impressions of a given wine? Maybe, but it seems far more likely (and logical) that the average CT user just doesn’t like those wines as much as RP does. I know how I’d vote. The resentment theory seemed like a stretch to me.

So… whose ratings should wine drinkers pay attention to? Aside from mine, I mean?

Here’s an answer, hewn not from any scientific study, but from the “truthiness” of my gut (into which I’ve poured thousands of wines by now): THEIR OWN and EACH OTHER’S.

Now, wouldn’t that be something to write about?

Or did I just do that?

Cheers!

Comments

25 responses to “Whose Ratings Should A Wine Drinker Pay Attention To?”

Great piece! Looks like the data are less conclusive on account of that important caveat, but one huge qualitative difference between critics and consumers/communities not mentioned therein is tasting vs drinking. Despite being well covered territory, that topic still doesn't get enough airtime.

Consumers don't taste wine, they drink it. BIG difference.

The analysis of anything is so far removed from the experience of enjoyment – in fact, as our shared experiences at perspective tastings attest – it infringes on enjoyment. So, if consumers are looking for guidance on buying wine to drink, then, yeah – totally agree with you – listen to others who have drunk the wine, not merely walked it through the paces of analysis and rating.

In this regard, communities like CT (though far from perfect) offer a distinct advantage over critics' numbers.

Cheers!

Thanks, Steve – AWESOME points about that difference, and it is pretty big. I have started to find a sort of Happy Place with that in my own reviews. Lately I have been opening up several bottles and spending time with them critically and then doing the ratings judgment stuff on each. THEN I pick faves from the bunch to have with dinner, and those become candidates for longer-form reviews or features on the blog. And plenty of times my fave is NOT the highest-scoring wine. So far, I am really enjoying the change in format as it acts as kind of a bridge between the two opposing shores you described. Cheers!

Who pays for these "studies" and why can't the money be put to use to benefit the human race instead?

Ah, Thomas, but they *do* benefit the human race. It's just a tiny, tiny special-interest group subset of the human race! :)

Oh, I see. Am I in that group?

If so, I want my share of the money to go to studying how long it takes one drop of water to get inside a grape skin to wreak its havoc. If there's any money leftover after that study, I want it to go to a study that disproves all previous studies. If there's still some money left over, I want it to go for some wine and a party, but do not invite those who do studies; I get my 8 hours of sleep each night without their help, thank you ;)

Oh, and once again, I agree with Keith, even if he seems to place too much weight on such studies…

Thomas – I am all for the party sans study-executors. :)

Good morning,

Great post, Joe. That's another great topic of discussion. I'm very much in agreement with Winethropology's comment. As always in the wine world, the challenge is comparing apples and oranges. Without similar scoring sheets and similar judgment methodology, you can only end up with different notes. It would actually be extraordinary to get similar results using different processes. This newly published paper confirms it one more time.

Cheers,

Olivier

Thanks, Olivier!

One alternative explanation for why community ratings have a closer correlation to price than the professional ratings is that non-professionals may be more likely to have their judgment influenced by the price. Perhaps the non-professionals are also less likely to have sampled the wines in a blind setting. At least one of the three professional sources, Wine Spectator, tastes these wines blind, and they at least claim not to alter the rating once the wines are revealed.

Keith – great points. The paper actually discusses that briefly in its conclusion.

I have been getting emailed links to those American Association of Wine Economists (AAWE) papers for a while, and I have to admit this was the first one I have read in full. Suffice it to say I am neither an economist nor a statistician, but I think they are putting way too much emphasis on CT scores versus actually trying to read and analyze the text. Of course for purposes of the analysis they want to do I understand that the scores are there, are ample and are tempting. And at some level I am just totally tickled that I have given them some fun data to play with!

@cellartracker – Hey, thanks for chiming in! I agree that there is boku geeky fun in playing with the data. But also agree that it needs to be taken with a grain of salt. Ok, maybe even an entire shaker. :) Cheers!

My new dog and best friend, Gus, is a big fan of yours, but he wants to know why you never write about dog treats.

Steve – I guess I am just not food-motivated enough :). If it is any consolation to him, neither is my new dog Bruno!

Wine is an experience, a feeling, a perception. The drinker has his/her own preferences simply because the first tingle and the first whiff triggers unique memories and feelings. How do the raters and authorities wish to rate and standardize those feelings?

Tom – In my view, they cannot. It is simply impossible to do it (which I suspect is your point :). That is why I am such a strong proponent of people getting off their duffs and learning about their own preferences when it comes to wine. Cheers!

I think you missed the point slightly on the Parker stuff. The strange thing isn't that people are scoring the wines he rates highest lower; it is that for the lower-rated wines (around 90) the CT community is actually scoring them higher than Parker. This may be due to the bunching effect you get when averaging a bunch of people together and then comparing them to one person (highs not as high, lows not as low), but apparently we don't see the same thing with WS and Tanzer. I'd agree it's unlikely people are twisting their mustaches and trying to stick it to Parker.

I think you're also dismissing the price thing a little too easily. My biggest takeaway from this is that CT ratings for this subset of Bordeaux were very highly correlated with price while Parker and WS were much less so. Parker in particular, given that he apparently does not taste these blind, appears to be doing a fine job of removing any prestige/price prejudice from his ratings. In fact, it's possible that the statistically significant difference between Parker and WS and CT is partially or mostly due to this difference around price (without running a regression there's no way to know for sure), in which case it would appear that Parker/WS are more reliable than the CT community in this case, since I think we can all agree that while quality/price is correlated, it's not at the .7 level.

We're on record as being fans of blind tasting for comparative purposes and the wisdom of crowds in our Tasting Panels, but this study, to me, scores points for the ability of some to taste non-blind effectively and the dangers of crowds. Of course, as was pointed out in the study and the comments, the CT ratings are almost all coming from non-blind tastings. So perhaps, non-blind+crowds=trouble, at least for price/prestige regions like Bordeaux?

Hi Phil – I think the key thing is that the "crowd" are paying for the wines AND, for the most part, not tasting blind. We know scientifically that cognitive dissonance comes into play for products that a consumer buys with their own money and then doesn't like. It could certainly explain the disparity you point out with the crowd rating wines higher than Parker…

Fabuloso! Now we have others telling us how, and how much, to feel. I'll prefer my own counsel, though. Cheers!

Bill – I don't think anyone is telling you how to feel. In my case, I'm often telling people how a wine made *me* feel, which is a completely different thing altogether.

Hi Joe,

Thanks very much for mentioning our paper here. I am glad to see that it has been received with quite some interest. So thank you all for your comments.

There are a few issues that have been raised here that I'd like to address. The first is the question of taste. Of course taste is highly subjective and hard (some would say impossible) to quantify. But the fact of the matter is that nowadays quite a number of different ratings exist which do just that: quantify taste. And if you go to your local wine store you will see that they are displayed in ways that will surely have an impact on buyers.

As we make clear in the first sentence of the article, "wine is an experiential good; until one buys and opens a bottle the content and quality remain unknown". Assume that you were planning to buy a bottle of 2005 Bordeaux for a special occasion and you had already managed to narrow your selection down to, say, 2 bottles. In an ideal world you would simply taste them both and go for the one you prefer, right? But we're speaking about rather high-priced wines here and maybe while you'd be willing to pay $100 for a bottle for this special occasion, you wouldn't be able/willing to pay $200 just to make sure you choose the one that you like more. What do you do in this instance? Other than asking a friend or the storeowner for their opinion (Do they have similar tastes? Have they had this specific wine? Do you have friends that drink $100 Bordeaux?), I suppose most people would pay attention to the ratings listed conveniently right next to the wines. And this is the premise for our study. So we're looking at a second-best option here, since the first-best (experience) might simply not be available.

As to the point raised by Eric, we do actually mention texts/reviews in our study and observe that they differ quite profoundly between critics in terms of their description of a particular wine. This is merely anecdotal evidence, of course. But I think it somewhat supports the argument we make in the article. And yes, for me as an economist the availability of data is just too tempting… ;) So thank you for providing that, Eric.

Last point, Thomas asked who paid for our study and I can at least for myself answer that question: I did. With my free time. Sounds odd that you would use your free time to analyze data on wine ratings. But well, that brings us back to the data fetish… In any case, I would be happy for someone to sponsor in-depth case studies on each of the 120 Bordeaux in our sample. ;)

Thanks again for your interest & all the best

Dennis

Thanks, Dennis!

"if you go to your local wine store you will see that they are displayed in ways that will surely have an impact on buyers"

– Understood – in fact, I've written quite a bit about this in the past.

"while you'd be willing to pay $100 for a bottle for this special occasion, you wouldn't be able/willing to pay $200 just to make sure you choose the one that you like more…"

– While we know that people are turning more & more to social means for wine recommendations, we also know that those scores help to move wines, so I think you rightly point out that your study has merit in that context. I can tell you that a few years ago, I *was* that guy at the store!

"Sounds odd that you would use your free time to analyze data on wine ratings"

– Not to me, and probably not to any wine blogger. These are labors of love :).

Cheers!

"I can tell you that a few years ago, I *was* that guy at the store!"

Same here… ;)

Cheers!

:)